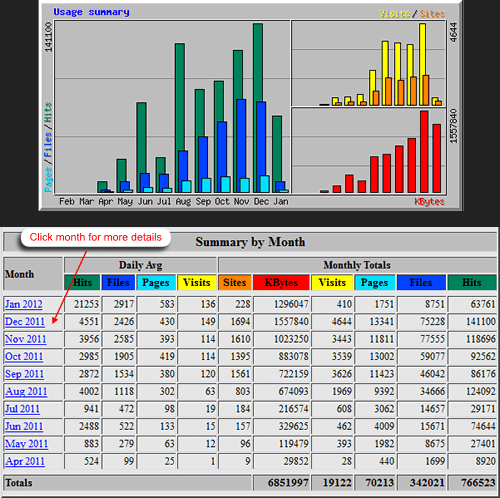

Webmasters mostly prefer page-based tracking over log file analysis, as the former is said to be about 30% more accurate. Two factors account for the difference: (i) caching, and (ii) robots and spiders.

Caching: Caching simply means saving a copy of a web page that has been requested. Your internet service provider, web hosting companies, your own network and your browser may all cache copies of the pages you browse. This helps each of them reduce the amount of traffic on its own network. With caching, your browser can first check whether a page is saved in its local cache on your computer, rather than going out onto the network. This affects the accuracy of log file analysis.

Log files are mainly a technical resource: the web server writes a record every time it is asked to do something. Their main purpose is to help server administrators manage the technology. A log file therefore records only the pages requested directly from the web server, and not the occasions when a cached page is displayed. As a result, the logs will never tell you when a visitor is seeing a cached copy of your web page.
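The effect can be illustrated with a toy simulation. This is only a sketch: the `Server` and `CachingBrowser` classes and the `/pricing.html` path are made up for the example, and a real browser cache follows far more detailed rules than this dictionary lookup.

```python
class Server:
    """Toy web server that logs every request it actually receives."""

    def __init__(self):
        self.log = []

    def fetch(self, url):
        self.log.append(url)  # only direct requests reach the log
        return f"<html>content of {url}</html>"


class CachingBrowser:
    """Toy browser that keeps a local copy of every page it has fetched."""

    def __init__(self, server):
        self.server = server
        self.cache = {}
        self.views = 0

    def view(self, url):
        self.views += 1
        if url not in self.cache:  # go to the network only on a cache miss
            self.cache[url] = self.server.fetch(url)
        return self.cache[url]


server = Server()
browser = CachingBrowser(server)
for _ in range(3):
    browser.view("/pricing.html")

print("page views by the visitor:", browser.views)
print("requests in the server log:", len(server.log))
```

The visitor looks at the page three times, but the server log holds a single entry, which is the kind of undercount described above.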

Caching does not affect page-based tracking, however. Using a technique called cache busting, a page-based tracking system ensures that it records every time a visitor views a page, even when the page comes from a cache. In simple terms, a page-based tracking system can record each and every time your pages are loaded into a browser. Reports from web server log analysis are said to undercount by around 30%, whereas page-based tracking systems give a far more accurate report about your website. As a result, page-based tracking systems like Google Analytics are widely used and trusted by webmasters.
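A minimal sketch of the cache-busting idea: if every tracking request carries a unique query parameter, the URL is different each time, so no cache can answer it with a stored copy. The endpoint `https://tracker.example.com/pixel.gif`, the function `build_beacon_url` and the parameter names `page` and `cb` are hypothetical and not taken from any particular tracking product. Real systems usually do this in JavaScript inside the browser; Python is used here only to show the principle.

```python
import time
import uuid
from urllib.parse import urlencode


def build_beacon_url(base_url: str, page: str) -> str:
    """Return a tracking URL that is unique for every call."""
    params = {
        "page": page,
        # Cache buster: timestamp plus a random token, never repeated.
        "cb": f"{int(time.time() * 1000)}-{uuid.uuid4().hex}",
    }
    return f"{base_url}?{urlencode(params)}"


# Hypothetical tracker endpoint, for illustration only.
TRACKER = "https://tracker.example.com/pixel.gif"

first = build_beacon_url(TRACKER, "/pricing.html")
second = build_beacon_url(TRACKER, "/pricing.html")
print(first)
print(second)
```

The two printed URLs point at the same resource but differ in the `cb` value, so each page view results in a fresh request that the tracking server can count.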

Spiders and robots: Non-human activity, such as that of spiders and robots, forms a large percentage of Internet traffic and can distort the analysis of any site.

As you probably know, a spider is a program created by a search engine to visit sites and read their web pages and other information in order to build the search engine's index. A robot, on the other hand, can be defined as any program that visits your site, for any of various purposes. From a marketing analysis perspective, this non-human activity is mere noise. It distorts the information about what real human visitors are doing on your website, which in turn prevents you from getting a true picture of your website activity.

The best solution is to ignore this activity as far as possible by filtering it out of your analysis. A page-based tracking system omits around 90% of this activity from its records, so you get a much clearer picture of what people are doing on your site.
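For anyone still working from log files, a rough version of this filtering can be sketched as follows. It assumes the log uses a format in which the user agent is the last quoted field on each line, and it relies on a simple keyword heuristic. The sample lines, the keyword list and the function names `is_bot` and `human_hits` are illustrative assumptions, and robots that disguise their user agent will slip through.

```python
import re

# Keywords that commonly appear in the user agents of spiders and robots.
BOT_PATTERN = re.compile(r"bot|crawler|spider|slurp", re.IGNORECASE)

# Assumes the user agent is the last double-quoted field on the line.
UA_FIELD = re.compile(r'"([^"]*)"\s*$')


def is_bot(log_line: str) -> bool:
    """Guess whether a log line was produced by a robot or spider."""
    match = UA_FIELD.search(log_line)
    user_agent = match.group(1) if match else ""
    return bool(BOT_PATTERN.search(user_agent))


def human_hits(lines):
    """Keep only the lines that do not look like robot traffic."""
    return [line for line in lines if not is_bot(line)]


# Made-up sample log lines, for illustration only.
SAMPLE_LINES = [
    '203.0.113.5 - - [10/Oct/2023:13:55:36 +0000] "GET /index.html HTTP/1.1" '
    '200 2326 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '198.51.100.7 - - [10/Oct/2023:13:55:40 +0000] "GET /index.html HTTP/1.1" '
    '200 2326 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; '
    '+http://www.google.com/bot.html)"',
]

for line in human_hits(SAMPLE_LINES):
    print(line)
```

Only the first sample line survives the filter, since the second identifies itself as a search engine spider.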
