Website Optimization Tips – How to optimize your website

Following the latest Google Panda, Hummingbird & Penguin 2.1 updates, here is a list of 8 awesome tips for new-age SEO & digital marketing. Use these 8 SEO tips to boost your website’s search ranking in the long run & stay Google safe.

8 Great SEO Tips for Better Ranking

- Use long-form, in-depth content of 5,000-10,000 words with a proper table of contents.

- Use schema.org rich snippets / microformats for reviews, ratings, products, items, locations, people, businesses, events & more.

- Use Google Places / Google My Business for faster indexing & better search rankings.

- Use Google authorship & publisher markup on your website for more author rank & site authority.

- Use contextual linking within your content, both internal and external to your site, to create a more natural-looking link profile.

- Add Facebook Open Graph, Twitter Card, Google+ integration & other social meta data tags to your website.

- Use the disavow links tool wisely to remove really low-quality backlinks. Monitor Google & Bing webmaster tools regularly.

- Focus only on related & high-quality backlinks. Join the social conversation. Focus more on engagement & content sharing.

- [Bonus Tip] A faster-loading & mobile-responsive website leads to an enhanced user experience, longer time on site, a lower bounce rate & more engagement. Both Google and your users will reward you for increasing your site’s performance.

- [Bonus Tip] Merge similar old content from your website or blog into a cornerstone page or post.
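The schema.org tip in the list above can be illustrated with a minimal sketch. It assumes JSON-LD as the markup format and uses Python purely to build the snippet; the product name and rating numbers are made-up illustration values, not real data.

```python
import json


def product_review_jsonld(name, rating, review_count):
    """Build a schema.org Product snippet with an aggregate rating."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    # Wrap the JSON in the script tag used for JSON-LD structured data.
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


# "Example Widget" and its numbers are hypothetical.
snippet = product_review_jsonld("Example Widget", 4.5, 27)
```

The resulting string would be pasted into the page's HTML; other schema.org types (events, businesses, people) follow the same pattern with different fields.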

Related reading: How to write killer SEO content

4 Fundamental SEO Techniques That Would Click for Your Site Ranking

Learn about 4 fundamental SEO techniques that can help your website rank better in Google and other search engines.

For any online business, it is necessary to use proper search engine optimization techniques to improve your website’s rank in the results of popular search engines. Basically, a search engine is a program made to find data related to a certain phrase or keyword, and its job is to display results related to the searched keyword. Your online business must rank highly in the search results of popular search engines such as Google, Bing, or Yahoo.

A high ranking in search engines is a significant factor in the success of any online campaign or business. If you want your website to attract many new visitors, you must earn a place in the top results of popular search engines. According to a study, almost 90% of internet users take the information they need from the websites in the top positions of the search results.

Thus, increasing your website’s ranking is necessary for the success of your online business. Several SEO techniques can help you achieve this objective. SEO techniques basically aim to improve your website’s ranking through certain processes and methods.

Here are the four best SEO tips that can help you improve your site ranking:

1. Choosing Right Keywords:

Selecting the right keywords is the first step of SEO for improving website ranking. It is a significant step for the success of your business, as it ultimately affects how easily your website can be found. You must pick keywords that are common and are used by a majority of searchers in popular search engines. This will bring new visitors to your website as its ranking increases.

Related reading: Definitive guide to keyword research

2. Get Backlinks:

Another SEO technique to bring high-quality traffic to your website is the use of backlinks. You can do this by submitting your articles to popular blogs or article directories, whose visitors can then follow the backlinks to your website. Link to your site from your posts, or from keyword text, using the full URL.

Related Post: Useful Link Auditing Tips , How to analyze your backlinks & Best Practices for link building

3. Use of META Tags:

Basically, META tags are the keyword, title, and description tags. You can optimize your website by ensuring that every page has its own set of keywords and its own title. Along with this, you must post high-quality content to your website so that you attract regular visitors. With proper use of META tags, you can increase views of your articles, which ultimately improves the ranking of your website.

4. Use of Social Media:

Proper and continuous use of social media can provide several benefits to your website that improve its ranking in an indirect way. Through social media sources like Facebook, Twitter, Digg, etc., you can expose your website better than through any other source. You can also generate awareness of any new content on your website with the help of social media.

Related reading: Social media optimization tips

Summary: SEO techniques are a must for the success of any online business. You can use the SEO techniques above to improve your website ranking.

10 SEO Factors That You Should Not Forget about!

Here are ten SEO factors that some people forget. Most of the time it is not even that they forget; they undervalue these factors so much that they leave them out of their SEO efforts or leave them until later. These factors are the foundation of your SEO efforts and should not be ignored or left off your pages. If you do ignore them, you are adding to the factors stacked against your SEO. Plus, your competitors will not have forgotten about them. Find the top SEO tips below.

The Title

Optimizing the title tag is one of the most effective SEO tips. It is often forgotten, overlooked or underestimated. Just because it does not have an instant effect in the same way that backlinks do does not mean that it is any less important or useful. The title is a large part of how a search bot figures out what your webpage is all about. It is also one of the most attractive elements, one that draws people in and gets them to read your webpage or blog post. Your title must be catchy and descriptive (neither too short nor too long), and it should contain a positive or negative sentiment, a power word and a number.

Related reading: How to write the most effective SEO title tags

Keywords

Many people forget to put their keywords into the Meta tag. This is because they tell themselves that they will look up the best ones, but never get around to it. You should at least take a few keywords from your web page’s text and put them in the keywords Meta tag (though this is not mandatory nowadays, because Google works out the keywords and overall meaning of a page from its content with its advanced AI-based algorithms). Still, anything is better than nothing. If you are purposefully putting keywords into your webpage (i.e. they are not appearing organically), then try to keep the keyword density between 0.6% and 1%.
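To make the 0.6-1% figure concrete, here is a rough sketch of how keyword density could be measured. It assumes a single-word keyword and a simple definition of a "word" (runs of letters, digits and apostrophes); real SEO tools may count differently.

```python
import re


def keyword_density(text, keyword):
    """Return the keyword's share of all words in `text`, as a percentage."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word == keyword.lower())
    return 100.0 * hits / len(words)


def within_target(density, low=0.6, high=1.0):
    """Check a density against the 0.6-1% range suggested above."""
    return low <= density <= high
```

For example, one occurrence of a keyword in a 100-word passage gives a density of 1%, which sits at the top of the suggested range.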

Related reading: How to do keyword research effectively

Bold

There is an old trick of adding emphasis to your words in order to make them stand out. This can be done by putting them in bold (the most effective method), in italics or in bullet points. How effective this still is remains unknown, but the technique should not be overlooked.

Meta Tags

The title tag is important, as are the Meta keywords and description. The keywords and the description are both part of making the search engines see you and making people click on the links to your site.

Keyword and content relevancy

It is foolish to put keywords into your Meta tag if they do not appear on the page. It is also foolish to put keywords into a piece of text where they do not belong, for example putting keywords about essay writing in articles about plumbing, or to force keywords into your content. Keep things natural and consistent. Use long-tail keywords, related phrases and synonyms as well.

Alt Text

For some reason the ALT text on an image is still an important SEO factor. It may be because the search engines have very little to work with when they index images, it may be because ALT text is sometimes more honest than image titles, or it may be because Google feels that ALT text adds to the user experience. No matter what the reason, ALT text is an important SEO factor.

Using H1, H2

Make sure that you put your keywords (preferably those in your title) within the H1 header and in at least one H2 subheading. Headers are a great way of pointing out your web page’s meaning, title and keywords, and a good way to strengthen your SEO.

Description

Put at least two of your keywords into your description and look up the number of words that Google prefers you to have in your description. Do not take the word of other websites. Look at the Google FAQs, forums and help sections for the most up-to-date advice. Make sure that you add a description within a Meta tag on every one of your pages.

Keywords in your links

Many people forget that they are supposed to put keywords in their links. Whether the links are pointing inwards or outwards, they should still have a keyword or two in them so that the search engines know what to expect when they reach the other end of the link.

Domain names and URLs

Putting your keywords into your domain name is sometimes impossible, but the URLs and page names are easy to put keywords into. All you need to do is name your page with something descriptive that has the keywords within. It is better than naming your pages page 1, 2, 3, etc, because that has no SEO value at all.

Page Speed

Focus on website loading speed or page speed optimization

How to Optimize your Website: Some More Tips

It is no secret that your website must be optimized to please search engines and win their favor. Other than creating websites with lighter images that load faster in the user’s browser and building up the SEO infrastructure, there are some points that you need to keep in mind.

First, write the title of your web pages with the utmost care. The title is the first piece of information that is read by the search engine crawlers. So, write a title that makes clever use of your primary keyword and is also eye-catching for the users.

Use of XML Sitemap with image location

Every website that wants to be viewed as a serious contender for higher positions in the SERPs must contain a sitemap. Sitemaps serve a dual role, just like the title of a web page. An HTML sitemap will guide users who are new to your website, while an XML sitemap serves SEO purposes. Search engines will find it easier to index your website if you have a functional sitemap in place. Keep updating this sitemap as you add or remove web pages. If you overhaul your website, take down the sitemap and install a fresh one.

What is the Importance of Sitemaps in SEO

Any reputable SEO agency will suggest many SEO tips and tricks that help website owners get a better page ranking. But one of the most underestimated aspects of a website is its sitemap. Sitemaps, as the name implies, are maps of your website. You can use them to show the structure of the website, the various sections within it, the links in the site, etc.

Sitemaps make navigation through any website easy. It is a vital component both for the visitors and search engines. Site maps are efficient ways to communicate with the search engines. With the help of robots.txt you can tell the search engines which parts of the website should not be indexed, while through site map you can instruct the search engines where to go.
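The division of labour between robots.txt and the sitemap can be sketched with Python's standard `urllib.robotparser` module. The robots.txt content, paths and sitemap URL below are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# A hypothetical robots.txt: one blocked folder and one sitemap location.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Sitemap: https://www.example.com/sitemap.xml
"""


def load_rules(robots_txt):
    """Parse robots.txt text into a RobotFileParser without network access."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = load_rules(ROBOTS_TXT)
# robots.txt says where crawlers should not go...
blocked = not rules.can_fetch("*", "https://www.example.com/admin/login")
allowed = rules.can_fetch("*", "https://www.example.com/blog/seo-tips")
# ...while the Sitemap line points them to where they should go.
sitemaps = rules.site_maps()  # requires Python 3.8 or newer
```

Here the `/admin/` folder is closed to crawlers, the blog post stays crawlable, and the sitemap URL is exposed for discovery.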

Sitemaps have always been an integral part of web design practice, but now that search engines look for sitemaps, they have become an important part of SEO too. However, if you want to reap the best SEO benefits from sitemaps, a conventional sitemap will not do.

Google reads sitemaps in a special format (XML), which is different from the HTML sitemaps meant for human visitors. So, there is a need for two sitemaps: one for humans and the other for web spiders (such as Googlebot). It should be stated in this respect that the two sitemaps are not seen as duplicate content by the search engines, so having both will not be penalised.
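As a rough illustration of the XML format, the following sketch builds a small sitemap with Python's standard library. The namespace is the one defined by the sitemaps.org protocol; the page URL and date are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML for an iterable of (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


# Placeholder page and date, for illustration only.
xml_text = build_sitemap([("https://www.example.com/", "2024-01-01")])
```

In practice a CMS plugin usually generates this file for you; the sketch only shows what the spiders actually read.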

Sitemaps bring various benefits to SEO campaigns. They make a website easy to navigate and offer better visibility to the search engines. With the help of sitemaps you can inform the search engines about any changes to your site.

This does not mean your new pages will be indexed right away, but the changes will be indexed faster than on websites without a sitemap. When you submit a sitemap to the search engines, you no longer have to rely on external links to bring spiders to your website.

If the internal linking on your website is messy, a sitemap can help here too: it does not repair broken internal links, but it lets crawlers discover orphaned pages that cannot be reached any other way. You can also use sitemaps to group the content of a website, for example by section. Search engines still decide for themselves how to classify your content, but a well-organized sitemap gives them a clearer picture of the site’s structure.

If you have a new website with a large number of new pages, then a sitemap is a must-have. At present websites can still exist without sitemaps, but submitting a sitemap to search engines may well become compulsory. Spiders have crawled, and will continue to crawl, the web looking for fresh pages, but the popularity of sitemaps is bound to increase in the future.

Use of Google Analytics Effectively

You will not be able to fine-tune the SEO aspect of your website unless you have Google Analytics installed. Modern SEO is all about trying new strategies to get ahead of the competition curve. Now, to work out what works for your website and what falls flat, you need to test the changes you are making. Google Analytics will help you understand what these modifications are doing for your website. If the statistics do not encourage your changes, it is time to think along new lines. That is why installing Google Analytics on your web pages is a must.

Keep investing time & keep patience in SEO

SEO is not done in a week or even a month. It takes time for your website to reap the benefits of the changes that you make to make it more SEO-friendly. You need to be patient and keep investing time and energy in checking that your execution leaves nothing to chance. At the same time, your web designers and writers must fully comprehend what your objectives are for your website when it comes to SEO goals. You must all be on the same page in order to tame the search engine results and pull them in your direction.

WordPress SEO Tips

WordPress is one of the most popular blogging systems, powering more than 60 million websites worldwide. It is a free and open-source blogging tool & content management system (CMS) based on PHP & MySQL. The main goal is to promote your blog as widely as possible: unless you attract more readers & commenters, your write-ups won’t get promoted. In this article we discuss some easy SEO steps to promote your WordPress site. The WordPress SEO tips are provided keeping in mind the latest Penguin and Panda updates. Please find below some of the WordPress SEO suggestions:

Permalink Structure:

Try to keep the permalink as simple & short as possible (%postname%), so that visitors can easily understand from the permalink what the page is about. You can also include a single focus keyword in your permalink.

Steps to set the permalink structure

- Go to the WP dashboard

- Click on “Settings”

- Then select “Permalinks”

How to Use Permalinks to Optimize WordPress

WordPress is the most popular content management system on the planet. This is partly because its popularity fed its own PR campaign, and partly because its themes/templates may be highly search engine optimized. Whatever the reason that you have decided to use the CMS, you are able to use a thing called permalinks to optimize your WordPress blog.

What are permalinks?

They are another name for your URLs. When you buy a website domain, you buy something such as www.petsparkcars.com, and this is your home page. Your URLs are all of the pages that come off of that page, so you may have www.petsparkcars.com/cat-parking-a-mini-metro. That is a URL, and that name is what is known as your permalink in WordPress.

Controlling the structure of your permalinks

You do have full control over your permalinks with WordPress. There is a section called “Permalink Settings” which shows you a number of options. These options are mostly the WordPress common settings, with the final one being the custom structure. You may control your structure either by clicking on one of the common setting options (they are radio buttons) or by typing in a custom structure.

Your options will appear on the screen as:

- Default

- Day and Name

- Month and Name

- Numeric

- Post name

- Custom Structure

How the permalinks are set up

They start with a general format, which means starting with your home page, and then add your post-specific content. The reason WordPress puts in months and days, or sets them as numbers, is that it is built to be a blog, where older content is treated as less valuable. It uses numeric IDs or dates to show which posts are the newest. While this works very well as a blog function, it is not very search engine friendly.

Setting your permalinks to be more search engine friendly

WordPress is known for its SEO (Search Engine Optimization) properties. You may make your WordPress blog more search engine friendly by using the custom structure within the permalinks section.

In the custom structure section, delete anything that is in the panel already, click its radio button, and enter this into the blank panel:

/%postname%/

Doing this is going to set your URL default to the most search engine friendly setting. It will just show your domain name and your blog post name/title. This is very search engine friendly, so long as your blog post titles are also search engine friendly. For example, if your blog post title is “1001” or “App!! Stuff” then it is not going to be very search engine friendly. If it is something like the example given earlier, such as “cat parking a mini metro”, then your permalink is going to be search engine friendly:

www.petsparkcars.com/cat-parking-a-mini-metro

This is because the URL is descriptive, so that the reader can figure out what the page is all about, and so can the search engine.
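How a post title turns into a slug like the one above can be sketched in a few lines. This is a simplified approximation for illustration, not the routine WordPress itself uses, and it only handles plain ASCII titles.

```python
import re


def slugify(title):
    """Turn a post title into a lowercase, hyphen-separated URL slug."""
    # Replace every run of characters other than a-z and 0-9 with one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    # Drop hyphens left over at either end.
    return slug.strip("-")


slug = slugify("Cat Parking a Mini Metro")
```

With the `/%postname%/` structure, a slug like this is what follows the domain name in the final URL.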

It is sometimes possible to edit a URL after the fact

This is done by going into a post to edit it. Next to your permalink there may be a button that says “edit”. It is the same button that is available whenever you create a new blog post. It is there so that you can alter the permalink (URL) after the WordPress program has already auto-generated it. If you have set your WordPress to the custom settings shown above, then you do not need to do this. But, some people feel the need to go back and change old URLs.

Should you go back and change old URLs?

If your version of WordPress allows you to, then you may go back and change URLs. Or, you may have to delete the post and reinsert it (not an easy job and may confuse your post order). But, should you go back and change your old URLs? It is really up to you, but in my opinion, you should not!

It is going to confuse your blog post order if you remove blog posts and add in new ones with new permalinks. It is also going to create broken links, as people may have back linked to your blog post in the past. Changing URLs causes more problems than it solves.

Plus, your future posts are going to be search engine friendly, so you should take that as a win and run with it. Changing your old posts is not going to attract enough attention to warrant the time you are going to spend on it. If you do change a URL, the solution is to set up a 301 redirect from the old address to the new one. The Rank Math and Yoast SEO Premium WordPress plugins have an automatic redirection feature.
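The idea behind a 301 redirect can be shown with a small lookup table. This is only a sketch of the logic; the old and new paths are hypothetical, and a real site would configure redirects in the web server or a plugin rather than in hand-written code.

```python
# Hypothetical map from old URLs to their new, descriptive permalinks.
REDIRECTS = {
    "/?p=123": "/cat-parking-a-mini-metro/",
}


def resolve(path):
    """Return (status, location) for a requested path."""
    if path in REDIRECTS:
        # 301 tells browsers and crawlers that the move is permanent.
        return 301, REDIRECTS[path]
    return 200, path
```

Because the old address keeps answering with a pointer to the new one, existing backlinks continue to work after the change.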

SEO Post Title:

Try to use attractive headlines for your posts (up to 70 characters). They help draw visitors to your articles. Always use your key phrases in the title & modify the title accordingly. This is one of the best practices for WordPress SEO and earns good results.
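The two rules above (stay within 70 characters, include the key phrase) are easy to check automatically. The helper below is a hypothetical sketch; the function name and messages are made up for illustration.

```python
def check_title(title, focus_phrase, max_length=70):
    """Return a list of problems found in a post title."""
    problems = []
    if len(title) > max_length:
        problems.append(f"title is {len(title)} characters, limit is {max_length}")
    if focus_phrase.lower() not in title.lower():
        problems.append(f"focus phrase '{focus_phrase}' is missing")
    return problems


issues = check_title("8 WordPress SEO Tips for Better Ranking", "WordPress SEO")
```

An empty list means the title passes both checks.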

SEO Meta Description:

The meta description is a brief write-up of at most 160 characters. It helps visitors know exactly what a particular page or post is all about. Although the meta description is not considered to be a ranking factor, it can affect your conversion rates. It gives searchers a vivid idea of whether a given page has what they are looking for.
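A description can be kept within the 160-character limit with a helper along these lines. It is a minimal sketch that cuts at a word boundary and appends three dots; the function name is an assumption.

```python
def trim_description(text, limit=160):
    """Shorten text to at most `limit` characters, cutting at a word boundary."""
    text = " ".join(text.split())  # collapse runs of whitespace
    if len(text) <= limit:
        return text
    # Reserve three characters for the trailing dots, then drop the cut-off word.
    cut = text[: limit - 3].rsplit(" ", 1)[0]
    return cut + "..."
```

Text that already fits is returned unchanged, so the helper is safe to run on every page.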

Image Optimization:

It takes hardly a few minutes to optimize your images. Add an alt attribute to your images. This is very easy: just copy & paste the targeted focus keywords. Google does not have eyes to look at your images; it can only understand what is in the HTML source. Therefore, try to add alternative text to each of your images.
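Finding images that still lack alternative text can be automated. The sketch below uses Python's standard `html.parser` module; the class and function names are made up for illustration.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> tag that has no usable alt text."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # Treat a missing, empty or whitespace-only alt as "no alt text".
        if not (attributes.get("alt") or "").strip():
            self.missing.append(attributes.get("src", ""))


def images_missing_alt(html):
    """Return the src values of images without alt text in an HTML string."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing
```

Running it over a page's HTML source gives a to-do list of images to fix.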

You can use a plugin such as All in One SEO, Yoast SEO or Rank Math for better WordPress SEO.

Quality & well researched SEO content:

Quality, well-researched content is one of the most popular ways to promote your posts. Ideal quality content should be free from spelling errors, grammatical errors & keyword stuffing. Try to be informative & interactive in your write-ups. Link some of your keywords to other authority sites; this in turn helps lend authenticity to your post. Also use H2 and H3 for headlines, with related keywords in your subheadings.

The discussed points are some of the key factors that judge the quality of your sites & posts. Apart from all these, there are various other parameters that make your pages SEO friendly, like – pageload time factor, social media integration, internal linking etc.

Nowadays, WordPress is one of the most popular platforms globally. Many leading companies manage their websites in WordPress. In short: “Google loves WordPress”.

Related Reading: Top 5 On Page SEO Tricks & Top SEO Myths

Ecommerce SEO Tips – Playing with Content

Fundamental eCommerce SEO tips and infographics. Learn how to play with content for your eCommerce site for better search engine optimization and promotion.

As marketers, we all know the importance of search engine optimization for a business website. Though there are many best practices, it is by no means an easy task. And the challenges are greater when it comes to optimizing e-commerce sites.

It is of the utmost importance for an e-commerce site to rank higher in search engine results to get more visitors. The following are some content writing tips to make your online store visible in the top search results:

Content is king. Yes! For e-commerce sites as well, content, or rather unique content, plays the crucial role. Every product and category page needs unique content if you want your online store to stand apart. Google and other search engines value interesting and unique content when determining the value or relevance of a site for higher ranking. In fact, Google’s Farmer/Panda update, launched in early 2011, favors sites that have original content.

Well-written content will also help persuade visitors to become buyers, thus improving your sales. So, the next time you add a product description, write your own, which can read more like a review or focus on the product’s advantages, rather than bluntly copying its features or the manufacturer’s description.

Duplicate content can be another issue with e-commerce sites. However, duplicate content issues work on a different note for the online stores. To make these sites user friendly, the e-commerce sites allow the visitors to sort product lists by numerous parameters including price, product rating or popularity.

Though such features make things easier for your visitors, they can create major challenges for SEO. By sorting products you are actually creating multiple pages that have the same content; as far as the search engines are concerned, you are duplicating content, a big no-no for search engine optimizers. Search engines never consider these pages valuable, which will weaken all your SEO initiatives.

Though it can take a lot of time to fix this with a proper robots.txt file, you can at least use Webmaster Tools to tell Google about the sorting. In addition, create category pages for the broad keyword phrases that your visitors are likely to use. Also, add product-specific pages within each category to boost your SEO initiatives with proper and interesting content.
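Another common way to tame sorted listings, not covered above, is to map every sorted view back to one canonical URL. The sketch below shows the idea; the parameter names in `SORT_PARAMS` and the shop URL are assumptions and would need to match whatever your store actually uses.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed names of sorting parameters; adjust to your own store.
SORT_PARAMS = {"sort", "order", "dir"}


def canonical_url(url):
    """Drop sorting parameters so every sorted view maps to one URL."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query) if k not in SORT_PARAMS]
    return urlunsplit(parts._replace(query=urlencode(kept)))


canonical = canonical_url("https://shop.example.com/shoes?sort=price&page=2")
```

The resulting address is the one you would present to search engines as the single version of the page, while visitors keep their sorting options.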

Apart from attracting Google and other search engines, these category pages with unique content based on broad keywords will help visitors quickly find the information they are looking for, which in turn is likely to turn them into buyers.

Related reading: How to write killer eCommerce content

5 tips for optimizing an eCommerce product detail page [Infographics]

Source: Elliance

How to Know a Good SEO Strategy at a Glance!

Let’s face it. There are numerous sites online all fully concentrating on SEO strategies. You land on a particular blog, they’ll tell you “building link using blog commenting doesn’t work anymore” – you believe them immediately and stop using it. Shortly, you land on another random SEO blog and they tell you, “Link building using blog commenting drove our blog to a Page rank of 6” – you become confused all of a sudden.

As if that’s not enough . . . You still see yet another site telling you of another strategy which hopefully everyone will go crazy implementing over the coming months – like it’s the best thing since sliced bread.

But really, what’s my point?

Well, it’s quite simple. I’m not saying you shouldn’t rely a little on what those gurus tell you about recent SEO tactics that are working. That’s not what I’m trying to convey. What I mean is this: you should at least have a way to know, or better put, a means to calculate, whether an idea is a “born today and end in the trash can tomorrow” or a “born today and sky-rocket forevermore” kind of SEO strategy. Now that’s what I mean.

Once you are able to detect when a strategy is lame and won’t last long, you’ve single-handedly found out the difference between a GOOD strategy and a BAD one.

So, as I’m assuming, you want to know how to tell a good strategy from a bad one at a glance?

To know this, follow the simple points below.

A good SEO Strategy – Mustn’t be Hard to Rank. . .

It is that simple. A genuinely good strategy will normally get past any algorithm change and rank your blog quickly when the “right buttons” are pressed. But with a bad strategy, no matter how much time you spend pressing the “right buttons”, it just won’t seem to respond.

For Example-

Driving enough backlinks with a given “anchor text” straight to your blog will surely get you ranking in a very good position for that anchor text in no time. That shows that the anchor text SEO strategy is a good practice in modern times.

And, as with any good SEO strategy, the more you increase the number of links with that anchor text, the faster you climb the search engine rankings, until you get to the first page of Google for that specific text.

That’s how Pat Flynn got his “SecurityGuardTrainingHQ” (http://www.securityguardtraininghq.com) website to the top of Google search within a short period of two months for a keyword with 5,600 global searches per month. All he did was drive backlinks with his chosen anchor text, “security guard training”, for which he now tops Google’s first page.

So you see the trend? If anchor text as an SEO strategy doesn’t waste time in ranking your site and showing you results for your hard work, then it’s a good SEO strategy. A bad SEO tactic is the opposite: with a bad one, you’d rank at the bottom for, like, ever.

A Good SEO Strategy – Popularity Doesn’t Die Easily. . .

We know how this goes. You hear of a popular new tactic that is bringing in more traffic than anything you’ve ever imagined. Quickly, you rush out to implement it too, only to find that it isn’t really working any longer.

Have you ever been in that kind of situation, one that left you feeling helpless and made you look as if you had arrived late to the party?

Yeah, I have too. But like I said, you can find out whether an SEO strategy will last or not.

And how exactly do you find out?

Simple! Use Google Trends (http://www.google.com/trends) to check how popular the strategy has been recently. If its popularity has been going down from previous years to the present, that means people aren’t applying it anymore and don’t talk about it as much as they used to, because it no longer works.

That alone should be a negative pointer, enough for you to mark any such SEO tactic as a bad strategy.
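If you export the yearly interest figures for a search term, the "going up or going down" judgement can be made mechanically. The sketch below fits a least-squares line to a list of values and reports its direction; the function name is an assumption, and any numbers you feed it must come from your own export.

```python
def trend_direction(values):
    """Classify a series as 'rising', 'falling' or 'flat' via a least-squares slope."""
    n = len(values)
    if n < 2:
        return "flat"
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    # Slope of the best-fit line through the points (0, v0), (1, v1), ...
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    slope = numerator / denominator
    if slope > 0:
        return "rising"
    if slope < 0:
        return "falling"
    return "flat"
```

A "falling" result over several years is the negative pointer described above, though a single number never replaces looking at the graph itself.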

Like I said, a bad strategy will dwindle in popularity, while a good strategy will surely grow in search popularity and in implementations by fellow bloggers.

For Example:

Let’s take the “blog commenting” SEO strategy. From when it came out until now, it has grown from strength to strength. Countless people have given positive feedback about how they got a high Page Rank just by applying this one good SEO strategy to their blogs.

So when others heard about the good feedback people were getting just by commenting frequently on high-quality blogs, they applied the strategy too and got the same good result: a high Page Rank!

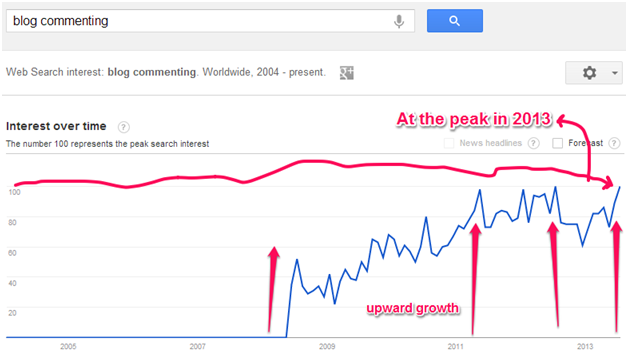

And of course, others heard and also applied it, and the same thing happened. A spiral of momentum was set in motion right then; people were applying and re-applying it over and over again. Just looking at the graph (taken from Google Trends), there was an insane level of application of this one strategy from 2008 to 2013.

If you look closely enough, you’d notice that the blog commenting strategy became so effective in 2012 that in 2013 it is at its peak right now! (It stands at 100, as measured on the graph.)

So you see? A good strategy gets more popular when more and more people try to implement it because it’s actually working. If it isn’t working, people won’t spend or waste money trying to implement it. And that will cause it to lose popularity at once.

You’re still not totally convinced?

Ok. Let’s take yet another example. . .

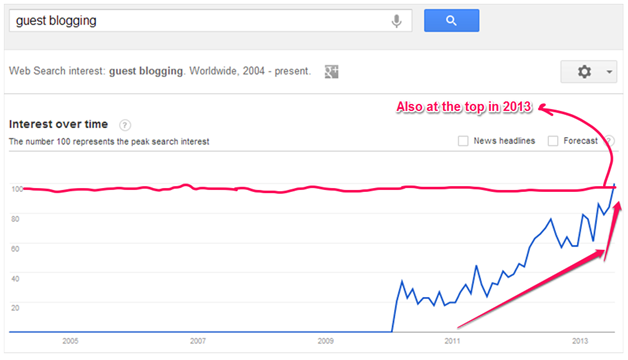

This time, it’s one of the oldest and most agile SEO strategies out there on the web. We all know what guest blogging is all about. And just like “blog commenting”, it will also fetch you a high Page Rank and get you quality traffic too.

So, as with “blog commenting”, when people started implementing it, good results started coming in, and in a rather unusual manner: unlike blog commenting, the traffic that guest blogging sent was really enormous. Glen from Viperchill.com said he got 3,000+ visitors from just one guest post submitted to lifehacker.org; the same goes for Bamidele Onibalusi from Writersincharge.com, formerly youngprepro.com, who got 2,000+ from one guest post submitted to problogger.net.

So what happened next? These gurus and others like them started telling people that guest blogging was the main traffic system of the day and, as expected, their readers took it upon themselves to try guest posting on other blogs too.

They got the same result and told their own readers, and that set off a spiral of implementations by other bloggers. This of course helped sky-rocket guest blogging to the top as a leading SEO strategy which has come to stay.

So the next time you think of accepting or considering an SEO strategy and going ahead to apply it, first look the strategy up on Google Trends to see how popular it is at the moment. Doing this will save you a lot of money which you might otherwise have thrown away on “rise today and end in the trash bin tomorrow” kinds of SEO tactics. So be wise.

Make sure you can look at an SEO strategy at a glance and figure out whether it’s here to stay or not. Look out for past dips in the tactic’s popularity, and also look at how hard it is for sites using the tactic to rank, before you trust it enough to implement it.

Do you have a good way of telling which strategy is good and which is bad? Let us know in the comments below.

Some Irritating SEO Problems and their Solutions

SEO is a demanding field of work. It taxes professionals because there are no immediate tangible results. The core SEO job is very organic and time-oriented. What you do today may bring you results sometime next week.

This lack of instant gratification makes professionals edgy because most times they do not know if they did the right thing. Waiting for the results of their task can be quite daunting. These challenges apart, there are many grouses that SEO professionals have to contend with. In this post, I will outline some of the most irritating problems that I have faced in my career as a digital marketing professional who specializes in SEO.

SEO Problems – #1: What Affects Rankings? Seriously?

Whenever you check out SEO guidelines, tips, tricks, whatever, you come across all these best practices. Many do not even know what the term means, but they keep using it in their conversations! Anyway, best practices are the ideal methods that you should follow. These include avoiding manipulative and spam links, building light websites, using keywords properly, and so on.

It is a long list. The problem is that despite following each of these practices to the T, websites fail to make a mark on the SERP scene. Result: SEO professionals get frustrated and angry! To add to their anger, they find many websites that ignore the best practices and yet get away with higher SERP clout. The question, therefore, is: what actually affects SERP rankings? I mean, seriously!

SEO Problems – #2: Why Do SERPs Fluctuate Unreasonably?

This is the second most irritating grouse for me. I follow every rule in the book, improvise judiciously, exercise caution and grab some favorable SERP slots. What do I find in a couple of days? I have lost them to a competitor, someone whose website showed a much weaker SEO setup when I analyzed it in my research.

It took me some time to accept the fact that Google has its own way of working. I make some changes in the website, Google does the same in their algorithms. Not every update is splashed all over the online media channels. My competitor is also doing tweaks internally. Taking all these variables into consideration, they have edged me out and nosed ahead. Instead of seeing red, you have to keep up your analysis and testing.

SEO Problems – #3: My Competitors are Spamming and Still Ranking!

When I do my research and find that my competitors are using spam and manipulative links and still hitting high SERPs, I flip my top! Is Google taking a nap? Why can’t they spot what I can? Undoubtedly, these thoughts are bound to cross your mind as an SEO professional, no matter what stage of your career you are at, experienced or otherwise!

A bit of understanding is required here. Spam links and their ilk can come from any quarter. They may not always be placed by the competitors themselves. A quick look at your own backyard and front yard may reveal some spam links pointing to your website as well! The point is that Google knows it too. It is not holding the competitor’s website responsible for links that have cropped up from somewhere. And that is why there is no penalty on their SERP ranks.

SEO Problems – #4: Google is Partial towards Big Brands

This is one grouse that I strongly felt to be true, much like other SEO professionals that I know. It seems that no matter what you do, big brands will always stay ahead of the competition curve. If you are competing against a giant in your domain, you are unlikely to win in an open battle, not if Google is the referee! With experience, I have learned two things. One, big brands have a strong offline presence and a lot of that rubs off online.

People click on their links because they recognize the brand name. Google is bound to offer what users want to click on! You cannot hold them at fault there. Two, to beat big brands in the SEO arena, you have to do things differently. For example, big brands are bound to have a vice-like grip on the generic keywords in your domain. You may find it tough to grab SERP spots there.

What you can do is look at long-tail keywords. These are not targeted by big brands because they deem them beneath their interest! Pick those up and spread out your influence. Also, find topics in your domain that big brands never talk about, for whatever reason. Highlight them and write about them. That will get you attention from a different group of consumers.

The Final Word

Google is the bedrock of SEO, as far as practicable. You have to work within it, not outside it. SEO professionals will do better to understand, accept and accommodate its whims, if they can be called that! The job is always to find a way around them, not to abandon Google out of irritation.

Solving Technical SEO Problems

Solving technical SEO problems is pretty time consuming. In this article we will look at common technical SEO problems and their viable solutions.

Solving technical SEO problems is often frustrating, especially when you have to solve the same problem on the same site over and over again. And I guess I’m not alone; many websites suffer from these issues. In this post I have tried to outline some common problems I have come across, with viable solutions.

Issue # 1: Uppercase URL vs. Lowercase URL

Websites built on .NET are the most common victims of this problem. The server is configured in such a way that it responds to uppercase URLs without redirecting or rewriting them to the lowercase versions. Search engines have become better at choosing the canonical version and ignoring the duplicates.

This has solved the problem to an extent, though there are many examples where search engines failed to do this properly. Thus, it is still essential to make the canonical version explicit rather than relying on the search engines to figure it out themselves.

Solution: Use the URL Rewrite module to solve this issue on IIS 7 servers. The tool has an option within its interface that lets you enforce lowercase URLs. Once you do this, the tool adds a rule to the web.config file, solving the problem.
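If you are not on IIS, the same idea can be handled in application code. Here is a minimal Python sketch of the logic, not the rule the IIS module generates. The function name `lowercase_redirect_target` is made up for illustration, and it assumes the query string should be left alone because parameter values can be case sensitive.

```python
from urllib.parse import urlsplit, urlunsplit


def lowercase_redirect_target(url):
    """Return the lowercase version of url, or None if it is already lowercase.

    Only the host and path are lowercased. The query string is left
    untouched (an assumption: its values may be case sensitive).
    """
    parts = urlsplit(url)
    lowered = parts._replace(
        netloc=parts.netloc.lower(),
        path=parts.path.lower(),
    )
    target = urlunsplit(lowered)
    return target if target != url else None


# Hypothetical URLs, for illustration only.
mixed_case = lowercase_redirect_target(
    "http://www.example.com/Products/Shoes.aspx?ID=Ab"
)
already_lower = lowercase_redirect_target("http://www.example.com/products/")
print(mixed_case)
print(already_lower)
```

When the function returns a URL, the server would answer the request with a 301 redirect to it; when it returns None, the page is served as usual.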

Issue # 2: Homepage’s Multiple Versions

This too is more common with .NET websites, but it also happens on other platforms. While auditing your site, check whether there is a duplicate version of your home page. To check, simply type www.yourwebsite.com/default.aspx OR www.yourwebsite.com/index.html OR www.yourwebsite.com/home

Search engines can find these duplicates of your homepage via XML sitemaps or navigation. You can easily solve this problem without getting into the details of how these pages are generated.

Solution:

Finding these pages can be a guessing game, as different platforms generate different URL structures. The best approach is to crawl your website and export the crawl to a CSV. Then filter the crawl by the META title column, searching for your homepage title. This way you can easily find the duplicate pages of your website’s homepage. You can then add a 301 redirect from each duplicate page to the correct page. I have also seen many solve this problem with the rel=canonical tag.

Many use tools like Screaming Frog to crawl the site and find internal links that point to the duplicate pages. It is a good idea to go in and edit these internal links directly so that they point to the correct URL. This helps avoid losing link equity, which is a common issue when internal links go via a 301.
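The CSV filtering step is easy to script. The sketch below is only an illustration: the column names `Address` and `Title 1` are assumptions, so change them to whatever your crawler writes in its export, and the sample rows are made up.

```python
import csv
import io


def find_homepage_duplicates(crawl_file, homepage_url,
                             url_col="Address", title_col="Title 1"):
    """Return URLs whose title matches the homepage title.

    crawl_file is an open text file (or file-like object) holding the
    exported crawl. The column names are assumptions, not a standard.
    """
    rows = list(csv.DictReader(crawl_file))
    # Look up the title of the real homepage first.
    home_title = next(
        (row[title_col] for row in rows if row[url_col] == homepage_url), None
    )
    if home_title is None:
        return []
    # Every other URL carrying the same title is a likely duplicate.
    return [
        row[url_col]
        for row in rows
        if row[title_col] == home_title and row[url_col] != homepage_url
    ]


# Made-up crawl export, for illustration only.
sample = io.StringIO(
    "Address,Title 1\n"
    "http://www.yourwebsite.com/,Acme Widgets\n"
    "http://www.yourwebsite.com/default.aspx,Acme Widgets\n"
    "http://www.yourwebsite.com/about,About Acme\n"
    "http://www.yourwebsite.com/home,Acme Widgets\n"
)
duplicates = find_homepage_duplicates(sample, "http://www.yourwebsite.com/")
print(duplicates)
```

With a real export you would pass `open("crawl.csv", newline="")` instead of the sample. Each URL in the result is a candidate for a 301 redirect or a rel=canonical tag.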

Issue # 3: Soft 404 errors

This is a very common problem with websites, though users will hardly notice the difference. Unfortunately, search engine crawlers will. A soft 404 page looks like a normal 404 error page but returns the HTTP status code 200. The user sees the “Page not found” message, but behind the scenes the code 200 tells the search engines that the page is working properly. As a result, search engines will crawl and index pages which you would rather hide from the crawlers.

A soft 404 also means you cannot spot real broken pages and identify areas of your website where users are having a bad experience. From a link building perspective (I had to mention it somewhere!), this is not a good situation either. You may have incoming links to broken URLs, but the links will be hard to track down and redirect to the correct page.

How to solve:

Fortunately, this is a relatively simple fix for a developer, who can set the page to return a 404 status code instead of a 200. Whilst you’re there, you can have some fun and make a cool 404 page for your users’ enjoyment.

To find soft 404s, you can use the feature in Google Webmaster Tools which will tell you about the ones Google has detected.

You can also perform a manual check by going to a broken URL on your site (such as www.example.com/5435fdfdfd) and seeing what status code you get. A tool I really like for checking the status code is Web Sniffer, or you can use the Ayima tool if you use Google Chrome.
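If you would rather script the manual check, here is a rough Python sketch using only the standard library. The marker phrases are assumptions, so adjust them to the wording of your own error page. Note that `urlopen` follows redirects, so a broken URL that redirects to the homepage will report the homepage’s status.

```python
import urllib.error
import urllib.request


def fetch_status_and_body(url, timeout=10):
    """Fetch url and return (status_code, body_text), even for 4xx/5xx."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status, response.read().decode("utf-8", "replace")
    except urllib.error.HTTPError as error:
        # urlopen raises on error statuses; the code and body are still available.
        return error.code, error.read().decode("utf-8", "replace")


def looks_like_soft_404(status_code, body,
                        markers=("page not found", "404 error")):
    """True when the page reads like an error page but reports success."""
    if status_code != 200:
        return False
    text = body.lower()
    return any(marker in text for marker in markers)
```

To test a live site you could call `looks_like_soft_404(*fetch_status_and_body("http://www.example.com/5435fdfdfd"))`. A True result means the page says “not found” to the user while telling crawlers everything is fine.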

Issue # 4: 302 redirects instead of 301 redirects

Again, this is an easy thing for developers to get wrong because, from a user’s perspective, there is no visible difference. However, the search engines treat these redirects very differently. Just to recap, a 301 redirect is permanent and the search engines will treat it as such; they’ll pass link equity across to the new page. A 302 redirect is a temporary redirect and the search engines will not pass link equity because they expect the original page to come back at some point.

How to solve:

To find 302 redirected URLs, I recommend using a deep crawler such as Screaming Frog or the IIS SEO Toolkit. You can then filter by 302s and check to see if they should really be 302s, or if they should be 301s instead.

To fix the problem, you will need to ask your developers to change the rule so that a 301 redirect is used rather than a 302 redirect.
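Once the crawl data is in hand, pulling out the 302s is a one-line filter. The sketch below assumes each crawled URL is a dictionary with `url`, `status` and `redirect_to` keys. These names are made up for illustration, so map them to whatever your crawler exports.

```python
def temporary_redirects(crawl_rows):
    """Return (url, target) pairs for every row that answered with a 302."""
    return [
        (row["url"], row["redirect_to"])
        for row in crawl_rows
        if row["status"] == 302
    ]


# Made-up crawl results, for illustration only.
crawl_rows = [
    {"url": "http://www.example.com/", "status": 200, "redirect_to": ""},
    {"url": "http://www.example.com/old-page", "status": 302,
     "redirect_to": "http://www.example.com/new-page"},
    {"url": "http://www.example.com/moved", "status": 301,
     "redirect_to": "http://www.example.com/here"},
]
to_review = temporary_redirects(crawl_rows)
print(to_review)
```

Each pair in the result still needs a human decision: keep it as a 302 if the move really is temporary, otherwise ask for a 301.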

New-age SEO: More about Topics, Not Keywords

The way people search will change, now that Google is looking at this whole scenario in a new light. Keywords, the mainstay of SEO, will not be the defining building blocks in this new scheme of things.

Rather than matching keywords, searchers will get results according to the intention of the question that they ask, instead of the exact words that they use in the search.

This kind of thinking will take the steam out of the keyword issue and shift the focus to topic-based content. A good way to do that is to conduct brainstorming sessions for content ideas and topics instead of relying entirely on SEO executives to give you keywords to write on.

This novel initiative of Google means that the over-insistence on keywords is set to end. Many SEO companies have damaged their online marketing efforts because of their reliance on keywords and data based on keyword research. It also ends the supremacy of the SEO department over content writers.

It is really frustrating when well-written content pages are rejected because they don’t adhere to the right keyword density or some similar prerequisite. The emphasis on keywords and their use in content has also compromised the quality of writing to a great extent. If you look at those rare web pages with readable content, you will find that all of them put the stress on material and writing, rather than on keyword parameters.

Other sins revolving around keywords, like anchor texts and their abuse, will also take a fair beating. Websites that have built up their strategies for online marketing by relying on keywords will have to rework their plans if they want to fit in. New plans will involve serious thinking about the content that they publish, along with keeping an eye on what kind of topics interest online readers in their domain of work.

Use social media to find out what topics are trending in your line of business and come up with content that matches the tastes and preferences of online readers. Google has injected a very personal touch into the SEO domain by initiating this kind of search. It is passionate topics that matter, not sterile keywords.

Need Guaranteed SEO Results? Not Happening!

Are you an internet marketer looking for guaranteed SEO results from your team? Then you’re barking up the wrong tree! There is nothing called guaranteed or assured results in the world of search engine optimization. Any SEO team offering you that kind of a deal is actually taking you on a ride that you will eventually fall off!

There are various reasons why no one can guarantee what results you’re going to get in SEO through your efforts. What you can get, however, is a fair idea by studying the trends.

The reasons why SEO gives you no guarantees in ranking.

Reason one, the secrets of the search engine algorithms are exactly that: secrets!

What’s worse, these secrets keep changing regularly! Search engine giant Google has made some of these secrets public knowledge in recent times, but most of them are still under wraps. You have to figure out the behavior of these algorithms as you work along.

You also have to keep in mind that modifications and tweaks are made to the search algorithms every now and then, without any formal announcements. So, to keep pace, you have to constantly test your SEO methods to see if they are still getting you results!

Reason two, the searches made by online users are primarily personal experiences. Search engines prompt users towards keywords and results according to their previous searches recorded in the browser history. For example, a person signed in on Google will see results different from those of someone without a Google account at all. This is because when you’re signed in, Google records what you’re searching for and offers you search results depending on this recorded history.

Reason three, websites differ from each other even if they are in the same domain of work, much like two persons belonging to the same family and household. What works for one may or may not work for another. This fact holds true for web content, designing as well as keywords. You cannot replicate the success of one website by imitating it for another site’s SEO.

Ranking and online traffic also depend on how old the domain is and how trustworthy it is among online users. You have to take that into account as well while drawing up the SEO plan. So, the next time someone offers you guaranteed results on SEO, you know better!

The New SEO for the New Google

Google has changed its ways in the last couple of years, especially with updates like Penguin and Panda. SEO companies know every review written about the new face of Google. However, despite keeping their knowledge up to date, many SEO teams continue to dish out the old SEO formats for brands and businesses! There is little change in how they conduct their SEO or how they bring online visitors to their websites, or those of their clients. As a result, they face the threat of a severe penalty, if not online oblivion.

Make no mistake! This is no rant against SEO companies or teams! I just want to highlight an important fact that has scratched itself to the surface of the internet: the emerging and evolving face of SEO. Google, the primary search engine on the internet, and the deciding authority on almost everything related to SEO, has changed its plan.

It is no longer about content farming, link buying or similar other evils that SEO companies could indulge in and get away with! The modus operandi has changed for the better and every self-respecting SEO team has fixed their old ways.

During the time of these updates rolling out from the stable of Google, SEO professionals, including myself, felt a little baffled and hassled as to why Google was making these changes when we had already perfected the art of knowing what SEO is all about.

Months down the line, I share the optimism of SEO experts across the globe that no matter how many hate mails you direct at Matt Cutts, Google has turned a page in the right direction. It is true that along with the negatives, some positives were also pushed out of the frame. But that is myopic thinking on our part!

For every epoch making event, there will be detractors and small prices that we all have to pay. With Google’s new face, the crusade against spam has made work tougher for SEO teams. Generally speaking, it is only the lazy SEO units that don’t want to raise their game to the next level that are still complaining about Google’s updates. This is the new Google and you better get that new SEO plan ready!

Let me know what you think of this new face, and functioning, of Google.

The New Dimensions of SEO – Search Experience Optimization

Matt Cutts of Google described SEO as Search Experience Optimization and not search engine optimization, as we know it. There is a lot of meaning and significance in this terminology. It redefines SEO as we know it, and also the concept of keywords.

With the Hummingbird update of Google, where the previous algorithms were morphed into something new and more user-oriented, the world of SEO is about to change for the better. The conventional thinking, which pivoted on keywords and built the SEO framework on these little building blocks, is going to change in a subtle but definite way. Let’s explore the new dimensions of SEO!

To begin with the coinage of Matt Cutts: SEO is going to be more about the user experience than about actual keywords. The intention of the user is going to be of more value than the actual keywords typed into the search box.

Let us consider an example here. Suppose you search for “Who won the US Open Men’s Singles this year?” Google will throw up an answer for you, say X. Now, search for “Whom did he beat in the finals?” The answer here will be Y. That is where the difference between conventional and new-age SEO lies. Let me explain.

In the second search, you are not mentioning the US Open Men’s Final, the year, or the name of the winner, so how does Google come up with the right answer? It is because Google is moving away from searching purely by keywords, which in the second case would show results matching ‘finals’ and ‘beat’, irrespective of the sport, tournament or year!

Instead, Google draws upon the result of the last search, grasps the intention of the searcher and throws up a matching result. It does not pull in irrelevant results as it would have if it just went by the keywords in the search box. That is what Matt Cutts meant by search experience. In this new SEO, the perspective of the online user gets more attention when search results are served.

The faster SEO teams start thinking along these lines, the better they will be at tackling the new dimensions of SEO!

Top 3 SEO Topics You Should Never Blog About

There is no doubt that, as a blogger writing about SEO, you are tempted to share any tip or advice that comes your way. There is nothing wrong in that. In fact, it shows how serious you are about your commitment to the readers of your blog.

However, there are some topics in SEO that you would do better never to blog about. Writing or sharing your advice on these topics will prove to be a regressive step, and there will be those embarrassing moments when you put your foot in your mouth! What are these dreaded SEO topics that you must avoid at all costs? Let’s find out!

Much of the success of your blog, or the lack of it, comes down to the number of readers who come in to read your content. If you have devised a method to get more visitors to your blog by sending out emails, do not make this information available on your blog.

Your readers, some of whom are sure to be bloggers themselves, are bound to carry off your email templates and replicate the formula. In your bid to impress your readers with a path-breaking method, you will kill the proverbial golden goose!

SEO Conversion Secrets:

So you have a special formula to squeeze the SEO juice out of search engines and enrich your website or blog? Keep it to yourself! Instead of giving out your trade secrets and spoon-feeding your readers on how to do their job, offer honest opinions and let them figure it out for themselves.

You can analyze the different tools of SEO and tell them honestly what you think of each of them, but do not let them in on what you do specifically for your own site.

Discourage Unethical Competitor Analysis:

Everyone in the SEO business is in it for some gain. It does little good to offer tips on unethically stalking others’ blogs and websites for competitive keywords in your domain of work. You can instead advise readers to invest time in experimenting with their own websites and keyword data. Also, look for data about your competitors that is already public. That way you can get more insights without opening closed, private doors.

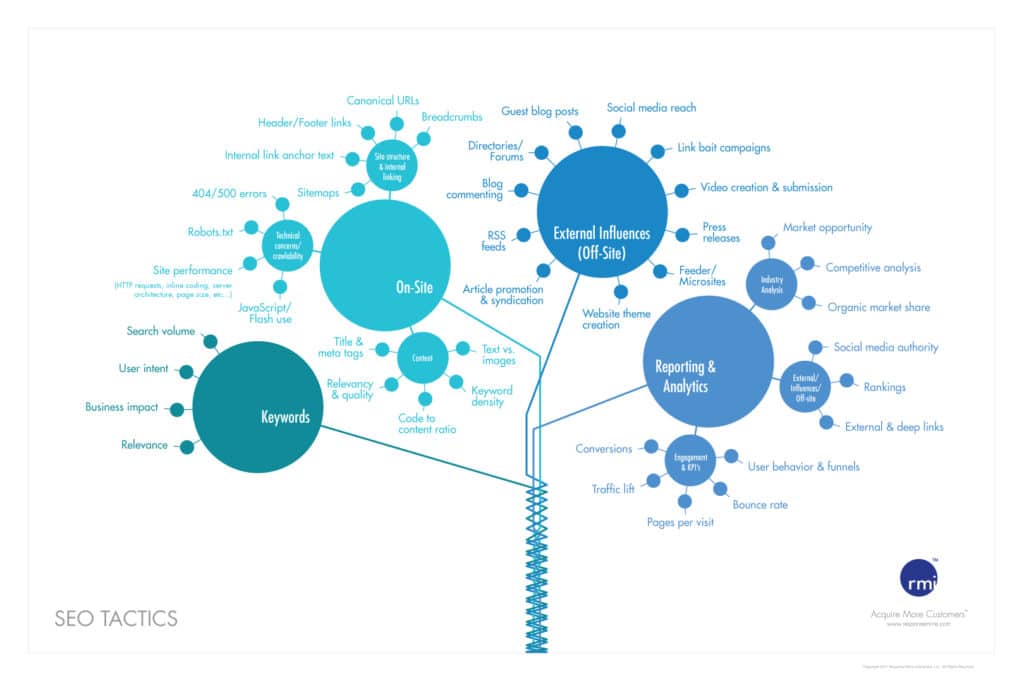

Top 10 off-page SEO techniques

Before discussing the top off-page techniques for this year, let us discuss what SEO is. In layman’s terms, Search Engine Optimization (SEO) is a technique or process that increases the visibility of a website in a search engine. There are two key factors: on-page SEO and off-page SEO. Both are important for SEO.

So, SEO = Off-page SEO + On-page SEO

Though off-page SEO and on-page SEO are both important for increasing the visibility of a website, here we will discuss the latest trends in off-page SEO techniques.

What is Off-page SEO?

Off-page SEO is the technique or process that promotes your website beyond the website design. In other words, off-page SEO is everything you can do off site to increase your rankings, chiefly by building more backlinks. More links will generally lead to better Google PageRank and better search engine rankings.

In 2013, Google changed its algorithm. So now the question is: under the current Google Panda & Penguin updates, what is the right off-page activity to get a better rank? Here, I will discuss some important off-page SEO techniques which can help you increase your website’s visibility and get a higher page rank:

Social Networking:

Social networking, or social media marketing, is the most essential element for branding as well as for online reputation management. Google’s search algorithm also counts social signals as one of its ranking factors. You need to join the most popular social networking sites, like Facebook, Twitter, LinkedIn, Google+ etc., and use them regularly and effectively.

By utilizing these social networking sites you can build an online community and engage your audience in a better way. If your site is good enough (good information, quality content, good layout & more), other webmasters will start linking to your website.

Guest Blogging:

Blogging is one of the best ways to promote your website online. Submit your blog to good blog directories, forums and article marketing sites. Remember that the blog or article should be unique, informative and interesting. You can use phrases like “Top Lists…”, “How to…”, “Best list of…” etc. in your blog title, because visitors like this type of content.

It will help to increase your authorship visibility on the web, and other websites might link to your website’s content, which in turn helps search engines crawl your website more frequently and helps you rank higher in search results. But be careful to do only high quality guest blogging, and within limits.

Blog Marketing / Blog Commenting:

Blog commenting is another good way to promote your website or blog. Comment on various blogs related to your niche, where you can put your links in the comments section. Remember not to spam; use it wisely. Put your comments on both dofollow & nofollow blogs.

Social Bookmarking:

Social bookmarking is a smart way to promote your website. But for social bookmarking, you need to deal only with good, high PR, effective bookmarking sites, like Digg, Delicious, Reddit, Stumbleupon etc. When you publish a new article or blog post, you can share it on these sites. Do not bookmark on poor quality pligg or scrap sites.

Photo and Video Sharing:

“A picture can say a thousand words.” Graphical things like photos, videos and infographics are more attractive to online visitors, and sharing them has become one of the popular SEO strategies. You can share your photos or images on major photo sharing websites like Flickr, Picasa, Photobucket, Picli, Pinterest etc.

You can also share or publish your videos, tutorials or any other related videos on the top video sharing sites like YouTube, Vimeo, etc.

Link Baiting:

This is another great way to promote your site. Link bait is a piece of content on a website, whether an article, blog post, picture, or any other section of cyberspace, created for the purpose of tempting other web pages to link to that site. Matt Cutts defines link bait as anything “interesting enough to catch people’s attention.”

Press Release:

Though Google has announced that press releases will not provide backlinks to your site, a press release is still a very good way to generate traffic and brand value for your website. 1888pressrelease, Open PR, and PR Leap are three websites where you can submit your press release article. PRnewswire is another good paid press release site.

Article Directories & Web 2.0 Properties:

Google loves fresh, unique and good content. So, if you submit good blog posts or articles to good high PR websites like Ezine, Go Article, Zerys, or even set up an account on Yahoo Voices, it will boost your website’s popularity and rank as well.

You can also submit your articles (useful and unique content) to some good web 2.0 sites like Squidoo Lenses and HubPages etc. But remember, don’t submit the same article or blog post on all web 2.0 sites. Don’t spin content or use automated software. You need a unique article for every web 2.0 site, because if you submit the same article on all of them, Google will count just 1 backlink for your website or might not count any link.

Question-Answer:

Try to join some question-answer websites like Quora, Yahoo Answers etc. Participate in the questions related to your subject; this can be a great way to increase your link popularity.

Business directories and Local listing:

List your business website in related business directories and local listing websites for SEO benefits. After creating an account in these directories, post some good, unique, quality information about your service, which will help to optimize your site and provide good quality backlinks.

Final note:

Focus on quality links, blend your strategy across tier 1, tier 2 and tier 3 links, rotate anchor texts (exact anchor, related keywords, naked link, brand name link, “click here” links), and bring variety to your link profile (articles, profiles, forums, PR, social, directories, wikis, answers, guest blogs, comments, debates, reviews & more).

In my view, these are some useful tips on the latest off-page SEO techniques. I hope that following these strategies will help you build up your SEO rankings.

Related Article: Most Common & Deadly SEO Mistakes

7 Bang-on Measures to improve SEO

SEO is evolving every day, and along with it all the procedures for getting good SEO positions for websites are changing. Compared with a few years ago, the Penguin and Panda updates of Google have given users a better experience of using the search engine. The target is to increase the relevancy of search results and to eliminate spam as much as possible.

We have a few suggestions for you to do that. With a few tips, you get to improve your SEO experience.

1. Unique Text

The best thing you can do to rank your page higher is to publish unique, good quality content. Duplicate content is a strict no-no, while unique content increases the value of what you have to say. This has a two-fold benefit: attracting more readers and getting good SERP positions.

2. Anchor Text Diversification

If you diversify both the internal and external anchor texts, it will be easier to avoid being flagged as spam. Using different variants of the same keyword, along with descriptive text, can make the difference.

3. Link Building has Changed

Keep changing your link building procedures from time to time. This produces backlinks that look natural. You can focus on guest posts, but you should also explore other link building methods for better quality links.

4. Diversify Traffic Sources

Google is the top search engine to date. But its frequent algorithm changes can damage your site at times. So, depend on a number of different sources. Diversifying your traffic sources can protect you in future and reduce the risk of being wiped out.

6. Videos

Believe it or not, an interesting video can make the difference. Videos are often searched for and viewed by people who seldom read text content. They have the capacity to bring referral traffic too.

7. Keep Mobile Users in Mind

Smartphones and tablets have already made experts think that internet search through mobile will surpass desktop search. So, websites surely need to be optimized for mobile usage. A responsive design can make the process easier for mobile users. After all, you cannot afford to lose potential traffic on mobile.

Why do you need to improve the SEO of your website?

Well, the reason you want to improve the search engine optimization of your website is simply that you want your website to pop up as soon as someone types something related to it into Google. For most people who have websites, this doesn’t happen most of the time, and there are several basic reasons why your website doesn’t perform the way it is supposed to.

When anyone types a topic into a search engine like Google, Bing or Yahoo, the website that pops up on top is usually the one that gets read. Although the search engine offers the person any number of results, hardly anyone ever looks at the second page, not to mention the pages after it.

So what you need to do is, in other words, “optimize” for the way search engines like Google look for and pull up websites. Most people are under the impression that the website that pops up first is the most reputed website! Well, not true; it’s just the one that has been optimized the best.

Search engines pull up websites based on a number of factors, but some of the basics never change. These basics are the rules of thumb of search engines like Google, and they stay in place because there are always smart web developers who try to manipulate the search engine by pulling a few stunts to bring their website to the top.

The rules of thumb that Google values the most are as follows.

First of all, the content on your website needs to be absolutely genuine. For example, if a paragraph has been copied from another website and pasted onto yours, Google will view your website as a duplicate and is likely to push it far down the search results.

The second rule of thumb is that your content needs to have keywords; these keywords help Google understand what your website is all about. Google has spiders or robots, a bit like the ones you may have seen in the movie The Matrix. These spiders constantly crawl all over the internet and pick up the new content they find uploaded every day, and Google then analyses this content to ensure that it is genuine. When you set up keywords that relate to your content, it helps Google or any other search engine to categorize your website easily.

Let’s assume that your website is related to health. You need to put in keywords like “health”, “well being”, “fitness” and so on. Once your website is easily categorized, whenever someone types health or well being into Google, the search engine can pull up your website too. The keywords you enter need to be relevant to the content of the site.

While setting up keywords, you need to be sure that the same keywords are also mentioned in the articles on your website. If they are not, Google will rate the keywords as bogus and your website will fall to the bottom of the rankings. Your keywords should make up at least 0.6% to 1% of the words on your website for it to rank nicely, and there is no limit on the number of keywords you can put in.
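
The 0.6% to 1% figure above is easy to check for yourself. Here is a minimal sketch, assuming keyword density means keyword occurrences divided by total words, times 100 (a common convention, not an official Google formula). The function names `keyword_density` and `in_target_range` and the sample text are made up for illustration.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Percentage of words in `text` accounted for by occurrences of `keyword`.

    Assumption: density = occurrences / total words * 100, and a
    multi-word keyword counts once per full phrase match.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    phrase = re.findall(r"[a-z0-9']+", keyword.lower())
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    # Slide a window of the phrase length over the word list and count matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)
    return 100.0 * hits / len(words)


def in_target_range(density: float, low: float = 0.6, high: float = 1.0) -> bool:
    """True if the density falls inside the 0.6% to 1% band mentioned above."""
    return low <= density <= high


if __name__ == "__main__":
    # Hypothetical article text: 200 words, 2 of which are the keyword "health".
    article = ("health " + "filler " * 99) * 2
    for kw in ("health", "fitness"):
        d = keyword_density(article, kw)
        print(f"{kw}: {d:.2f}% (in target range: {in_target_range(d)})")
```

Run it on the text of a real article to see which of your chosen keywords are actually present in the copy and which are missing.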

How to Deal with the Tension between SEO and UX

SEO and UX are not separate disciplines that cannot work in tandem. The two often share the same space online and, as an obvious outcome, often clash. Yet they can coexist without much collision. Read on to learn how that is possible.

The meeting point of user experience and SEO has been a topic of discussion for a long time. A number of elements that have a positive impact on user experience have the same impact on SEO. UX touches on a great many things that have a direct bearing on search engine rankings too.

Effect of UX changes on SEO:

Spam is one important element that affects page rank to a certain degree. Google can look at certain pages and decide that the site fits its spam template. User experience also has a direct impact on links, because it helps predict whether someone will link to your site or not. If 1,000 people already come to your site, that might result in 1 link per 1,000 visitors. With a better user experience, this can rise dramatically to 2 or 3 links per 1,000 visitors, which can improve the search engine ranking of your site.

Content these days has a huge effect on both user experience and rankings, because Google increasingly judges content the way users judge it.

User and usage data are also important. Technical factors like the mobile friendliness of the site and page load speed are among the important UX elements that matter a lot in shaping the SERP.

Almost everything done on a site or a page that has a positive or negative impact on user experience can also have a corresponding effect on SEO, with just a handful of exceptions. The exceptions lie in the areas where we find the most tension and the biggest challenges.

There are a number of tensions that exist between SEO and UX.

Page Consolidation versus segmentation:

A UX-only web world would favor page consolidation. If you imagine a world in which there is no SEO at all, you can create a single landing page where users find information on all the different elements linked to a single topic.

If a user searches for information related to Spanish holidays, they might have queries about a myriad of different things like the places to visit, the mode of transport, places to stay, etc. A site created in a UX-only world can consist of just one landing page containing all the details that the searcher might need.

However, in an SEO and UX world, the user will not be happy with all the information crammed into a single page. The user would like to be taken to the page that offers information matching their search keywords. Therefore, the SEO-friendly site should have separate landing pages for different search terms (different topics). The pages have to be linked properly, keyword-targeted and indexable. The experience is a lot different from that of a UX-only world. However, that is what a modern user looks for.

In the UX-only world, there is no need to think much about navigation, as there is no need to go from one page to another. If you need information on a particular matter, you can go to the area of the page that covers it. If you seek information on the transport services available in Spain, there is no need to navigate to another page.

However, in the SEO and UX friendly search world, the site might have multiple navigation routes to its different pages. It needs drop-downs, footers and extra sidebars to enable effective navigation. The links also require descriptive anchor text, as it is beneficial for the search engine and also for people who search on a mobile device.
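
To make the point about descriptive anchor text concrete, here is a rough sketch that scans a piece of HTML and flags links whose text is empty or generic. It uses only Python's standard `html.parser` module. The `GENERIC_ANCHORS` list, the class name `AnchorTextAuditor` and the sample navigation markup are assumptions made up for this example, not a standard of any kind.

```python
from html.parser import HTMLParser

# Hypothetical list of anchor texts that say nothing about the target page.
GENERIC_ANCHORS = {"click here", "here", "read more", "more", "link", "this page"}


class AnchorTextAuditor(HTMLParser):
    """Collects (href, anchor text) pairs for every <a href="..."> in a page."""

    def __init__(self):
        super().__init__()
        self._href = None   # href of the link currently being read, if any
        self._text = []     # text fragments seen inside that link
        self.links = []     # finished (href, anchor text) pairs

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            # Anchors without an href are ignored (href stays None).
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse whitespace so "Click\n here" becomes "Click here".
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None


def find_vague_links(html: str):
    """Return the links whose anchor text is empty or in GENERIC_ANCHORS."""
    auditor = AnchorTextAuditor()
    auditor.feed(html)
    auditor.close()
    return [(href, text) for href, text in auditor.links
            if not text or text.lower() in GENERIC_ANCHORS]


if __name__ == "__main__":
    sample = (
        '<nav>'
        '<a href="/transport">Transport in Spain</a> '
        '<a href="/stay">Click here</a>'
        '</nav>'
    )
    for href, text in find_vague_links(sample):
        print(f"Vague anchor text {text!r} on link to {href}")
```

A check like this only catches the obvious cases. Whether an anchor text is truly descriptive still needs a human eye.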

This discussion has focused on the elements that are necessary for both a quality user experience and an SEO-friendly search process. The site content must be arranged in a manner that helps the website rank higher while setting aside the tension or collision between user experience and SEO.

SEO with conversion optimization can give you the best results

Infographics and how-to guide for SEO conversion optimization. Organic SEO and conversion optimization can boost your sales revenue drastically.

Though conversion optimization is considered the newest SEO technique in practice, there is a basic difference between conversion optimization and the traditional search engine optimization process. Unlike SEO, conversion optimization is not a one-shot attempt to improve your website ranking in the search engine.

Conversion optimization has proved to be an integral part of modern online marketing and is something more than optimizing a landing page or other traditional methods. Short cuts and black hat SEO techniques are never a solution for successful online marketing and often bring terrible and disastrous results.

There are also some similarities between conversion optimization and traditional SEO techniques, as both of them are data-driven. But conversion optimization digs deeper into user behaviour. It runs a crucial segmentation analysis of how different segments of visitors interact with your site, which helps you optimize your website around those particular experiences. The other most attractive features of conversion optimization are:

- Some positive results of conversion optimization may require paid search, but the investment is worth every last penny. In combination with PPC (pay-per-click) search advertising and organic SEO, conversion optimization allows scope for highly controlled experimentation.

- While a blog post may be considered the atomic unit for SEO experimentation, in the case of conversion optimization it is a PPC ad with a perfect landing page that serves the purpose.

- Most of the time, the functional area of conversion optimization extends beyond a single page. The multi-step landing pages used in the process, also known as ‘conversion paths’, have the potential to generate a better conversion rate because they engage respondents in a mutually productive dialog and allow scope for segmentation at the same time.

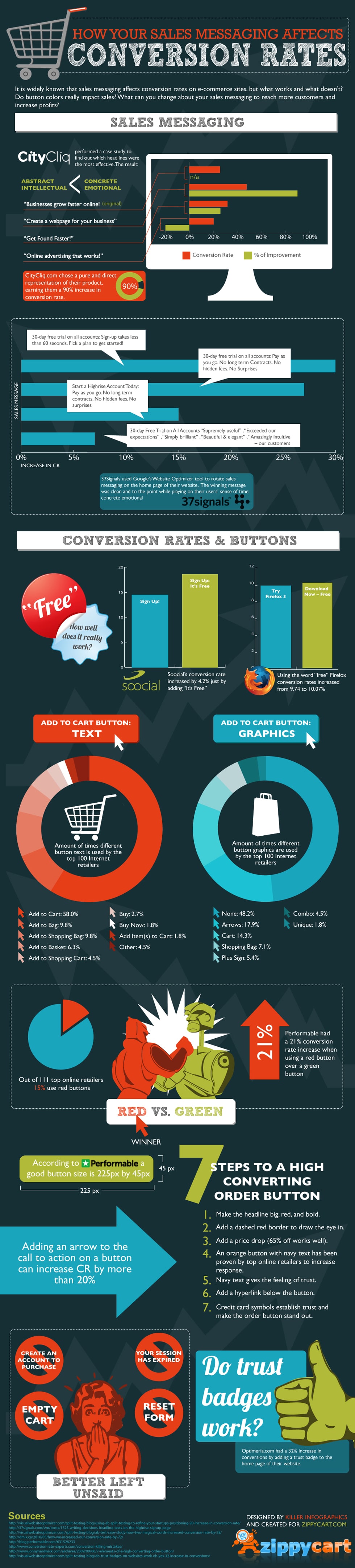

How Messaging and Design Affect Conversion Rates [Infographic]

The bottom line of conversion optimization is to effectively convert visitors into sales. It is the process of fine-tuning a website so that it achieves a lower bounce rate and visitors take the actions you want them to take.

Metrics and usability analysis, landing page and sales funnel optimization, multivariate and A/B testing, website optimization and maintenance, and reporting on key performance indicators (KPIs) are the common steps taken during a conversion optimization process.
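
As an illustration of the A/B testing step, here is a minimal sketch that compares the conversion rates of two landing page variants and checks whether the difference is likely to be more than chance. It assumes a standard two-proportion z-test with a pooled standard error, which is one common way to evaluate an A/B test, not the only one. The visitor and conversion counts are invented for the example.

```python
from math import erf, sqrt


def conversion_rate(conversions: int, visitors: int) -> float:
    """Share of visitors who converted (0.0 to 1.0)."""
    return conversions / visitors


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference between B and A."""
    p_a = conversion_rate(conv_a, n_a)
    p_b = conversion_rate(conv_b, n_b)
    # Pooled rate under the assumption that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # No variation at all (nobody or everybody converted): no evidence.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Standard normal CDF via erf, then a two-sided p-value.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


if __name__ == "__main__":
    # Hypothetical test: 1,000 visitors per variant.
    visitors_a, sales_a = 1000, 100   # original landing page
    visitors_b, sales_b = 1000, 150   # redesigned landing page
    z, p = two_proportion_z_test(sales_a, visitors_a, sales_b, visitors_b)
    print(f"A: {conversion_rate(sales_a, visitors_a):.1%}")
    print(f"B: {conversion_rate(sales_b, visitors_b):.1%}")
    print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the redesigned page really does convert better. With few visitors, even a large-looking difference can be noise, so let a test run long enough before acting on it.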

It is not only website owners who can benefit from the conversion optimization process; bloggers can use the method with equal success. The most important reason to make your search engine optimization and conversion optimization work in tandem is that while SEO can bring more traffic to your website, it cannot improve the conversion rate. That can be achieved quite successfully by a proper conversion optimization strategy.

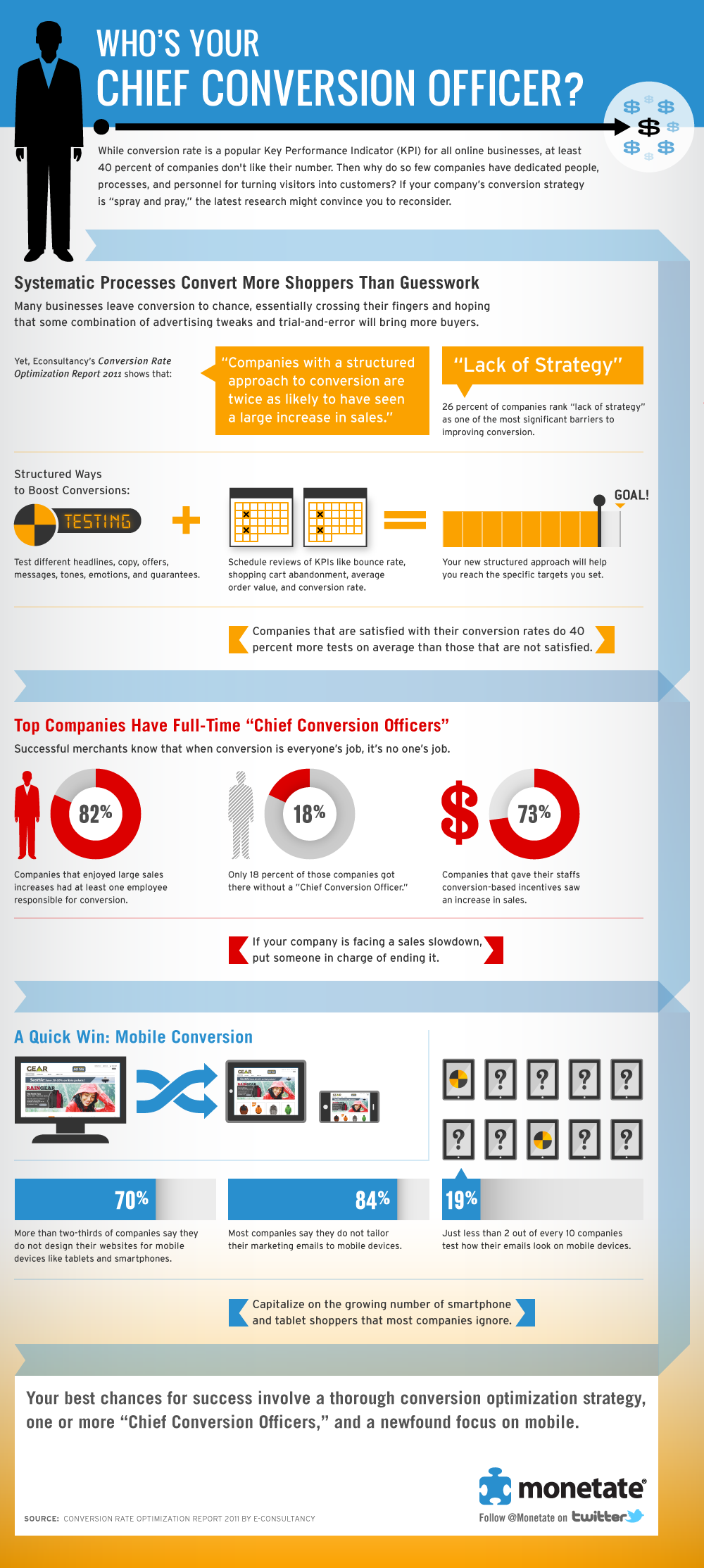

Infographic: Does your company need a chief conversion officer?

9 Steps to better conversion [Infographic]

Source: KissMetrics

Related reading: How to do conversion rate optimization

SEO Essentials for a Greenhorn

SEO Essentials: what do you need to know? For some years now, I have been running the 7boats digital marketing academy. No, this post is not about plugging my academy, but bear with me for a couple of essential lines before I jump into the topic! I started this academy because I had a lot of trouble finding experienced SEO professionals, among other digital marketing hands, for my company. I harked back to my own days as a greenhorn, when I picked up most of what I know today through self-analysis and research, coupled with the information and guidance of some distinguished seniors.

This state of affairs is particularly pronounced because you will scarcely find institutions teaching SEO. My academy is focussed on bridging this gap in the domain and dare I say, we have managed to do it successfully for years now. Writing from this experience, I’m compiling some SEO essentials for a greenhorn in this field. This post will not be about specifics and technical knowledge. For that kind of in-depth information, you have to check out my academy course material.

So, here is what you need to know about the SEO essentials as a newbie SEO professional!

First things first, you need a thorough knowledge of the internet as a whole. Picking up jargon and online lingo is crucial. No matter where you learn the tricks of the trade, you will have to fall back on this knowledge of internet jargon, like HTTP, domains and roots, clients, servers, DNS, VPN, PageRank, Domain Authority and the like. This information bank will be helpful when you study SEO further through online or offline tutorials. Even if you fly solo, you cannot always stop to look things up while reading articles on the subject. Knowing the jargon will facilitate the learning process.

Secondly, you have to understand that learning SEO is more practical than theoretical. That is how I learned this profession. You have to make mistakes and learn from them. As a newbie, tinker with web development tools. Create a scratch website and try to optimize it. In the process, you will learn what there is to know about domain hosting, etc. What’s more, you will pick up some additional tricks that are not found in any book or tutorial! It is fascinating what you will learn when you work things out all by yourself!

Related reading: How to select right SEO friendly web hosting

SEO alone is of limited value unless you combine computer skills with negotiation, marketing and PR skills. As an SEO professional, you need to hard-sell your site’s requirements. For example, can you close the deal on a guest post at a blog that is many levels higher up the online pecking order? It takes a lot of conviction and negotiating prowess to achieve this. The same goes for link exchanges. SEO executives are often required to seek out greener pastures to get more exposure for their websites and blogs. You need a commanding knowledge of popular online channels and of where you might find your target audience.

Finally, data crunching skills are a must for SEO professionals. In my training modules and work floor, I make special note of professionals with patient research skills. You don’t need to be a geek or a nerd to do this! You just need a nose for research and number crunching. Numbers speak more than you can imagine. You can develop precise strategies and targeted marketing if you are good with your numbers. You can also open up newer avenues and previously unknown doors by following the trail of numbers.

Other than these skills that are strictly by the book, there are others that require smart thinking and a flexible outlook. Handing a penalized website to a newbie is a good way to test how they handle an outright hostile situation! After all, in your SEO career, you will have clients coming to you with penalized sites. You need to know how to work them out of a tight spot.

This business isn’t always about going forward. It is also about pulling out of the muck, dusting yourself off and then embarking on the journey forward.

Measuring SEO Effectiveness

Learn how to measure SEO effectiveness. Important tips & facts on measuring progress of your SEO effort. How to track SEO ROI. Learn SEO benchmarks & KPI.